
Using Conda

Conda is an open-source package management and environment management system (developed by Anaconda), which is best installed through Miniconda or Miniforge. The tool is both cross-platform and language agnostic, and in practice, conda can replace both pip and virtualenv.

Conda uses so-called channels to distribute packages. Alongside the default channels provided by Anaconda itself, the most important channel is conda-forge, the community-driven packaging effort that is the most extensive and the most current (and that also serves as the upstream for the Anaconda channels in most cases).

Note that installing PySpark with or without a specific Hadoop version through the PYSPARK_HADOOP_VERSION environment variable is experimental. This installation method can change or be removed between minor releases.

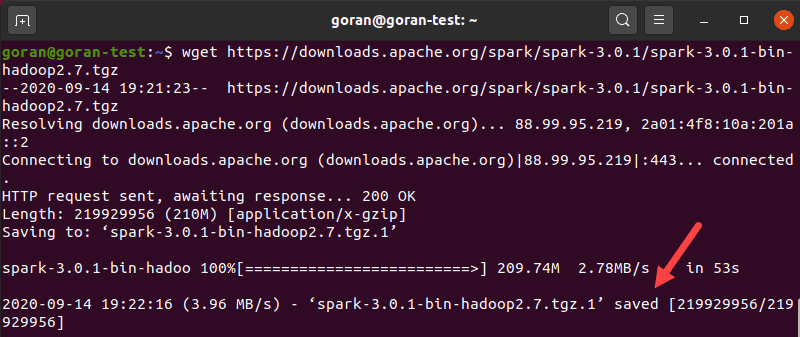

Supported values in PYSPARK_HADOOP_VERSION are:

- without: Spark pre-built with user-provided Apache Hadoop
- 2.7: Spark pre-built for Apache Hadoop 2.7
- 3.2: Spark pre-built for Apache Hadoop 3.2 and later (default)

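For example, to install PySpark built for Apache Hadoop 2.7, with pip's -v option to show the installation and download progress:

PYSPARK_HADOOP_VERSION=2.7 pip install pyspark -v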
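If you drive the installation from a script rather than a shell, the same idea can be sketched in Python. This is a minimal sketch, not part of PySpark: the helper name `build_pyspark_install` and the `SUPPORTED_HADOOP_VERSIONS` table are hypothetical, and the only assumption is that pip reads PYSPARK_HADOOP_VERSION from the environment as described above. The helper only prepares the command and environment; it does not run pip.

```python
import os
import sys

# Values listed above for PYSPARK_HADOOP_VERSION.
SUPPORTED_HADOOP_VERSIONS = {
    "without": "Spark pre-built with user-provided Apache Hadoop",
    "2.7": "Spark pre-built for Apache Hadoop 2.7",
    "3.2": "Spark pre-built for Apache Hadoop 3.2 and later (default)",
}


def build_pyspark_install(hadoop_version, base_env=None):
    """Return (command, environment) for a verbose pip install of PySpark.

    Hypothetical helper: it validates the value and prepares the call,
    but never runs pip itself.
    """
    if hadoop_version not in SUPPORTED_HADOOP_VERSIONS:
        supported = ", ".join(sorted(SUPPORTED_HADOOP_VERSIONS))
        raise ValueError(
            f"unsupported PYSPARK_HADOOP_VERSION {hadoop_version!r}; "
            f"expected one of: {supported}"
        )
    # Copy the environment so the caller's mapping is left untouched.
    env = dict(os.environ if base_env is None else base_env)
    env["PYSPARK_HADOOP_VERSION"] = hadoop_version
    # "python -m pip" targets the interpreter that runs this script.
    command = [sys.executable, "-m", "pip", "install", "pyspark", "-v"]
    return command, env


if __name__ == "__main__":
    command, env = build_pyspark_install("2.7")
    print("PYSPARK_HADOOP_VERSION =", env["PYSPARK_HADOOP_VERSION"])
    print("command:", " ".join(command))
    # To actually install, pass both to subprocess, for example:
    # subprocess.run(command, env=env, check=True)
```

Rejecting unknown values before pip starts makes a typo fail immediately instead of surfacing partway through a long download.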
